Everyone is building AI agents.

Half of them shouldn’t be.

That’s not a moral judgment—it’s a postmortem. Over the past year, I’ve watched teams bolt “agentic” features onto products that were never designed to survive autonomy. They didn’t gain leverage. They gained invoices, edge cases, and long Slack threads that start with “Does anyone know why this ran?”

Here’s the kicker: AI agents do work. They’re powerful, genuinely transformative, and the most exciting shift in applied AI since transformers went mainstream.

I love agents.

I also think 90% of teams shouldn’t touch them yet.

Both things are true.

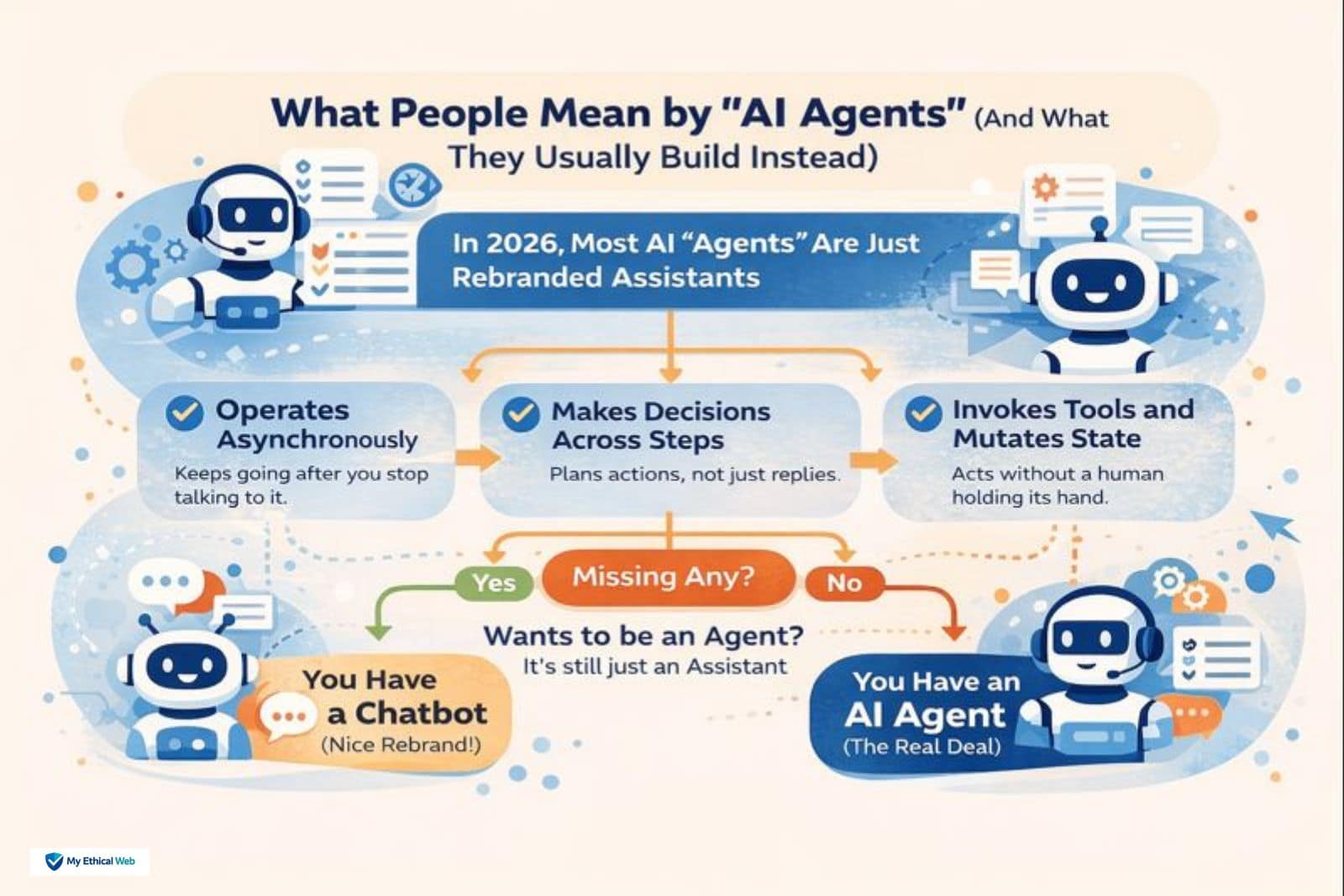

What People Mean by “AI Agents” (And What They Usually Build Instead)

Most products marketed as agents in 2026 aren’t agents. They’re assistants with better prompts and a longer memory window.

An actual agent has three defining traits:

- It can operate asynchronously (it keeps going after you stop talking to it).
- It can make decisions across steps, not just respond.
- It can invoke tools and mutate state without a human holding its hand.

If any one of those is missing, you don’t have an agent—you have a chatbot that’s been sent to a rebrand workshop.

Actually, that’s not entirely fair. Some of these systems want to be agents. They just never crossed the line from “helpful” to “responsible.”

The Ugly Truth About Autonomy

Autonomy doesn’t fail loudly.

That’s the part vendors don’t emphasize.

A chatbot says, “I’m not sure.”

An agent says nothing—then confidently calls an API with the wrong payload, writes bad data to a system you trusted, and politely logs “success.”

No alarm. No exception. No red banner.

We found one of our failures three weeks later, not because monitoring caught it, but because the cloud bill spiked and someone asked why a background workflow was calling the same enrichment endpoint 47,000 times for records that no longer existed.

That’s not an edge case. That’s the default failure mode.
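One way to make that failure mode loud is to cap how often a single tool can be called and to refuse work on records that are gone. The following is a minimal sketch, not what we actually ran: `CallBudget`, `CallBudgetExceeded`, and `enrich_record` are hypothetical names, and the existence check is modeled as a plain set lookup for illustration.

```python
from collections import Counter


class CallBudgetExceeded(RuntimeError):
    """Raised when one tool is called more often than its budget allows."""


class CallBudget:
    """Counts calls per tool name and fails loudly once a limit is crossed."""

    def __init__(self, max_calls_per_tool: int) -> None:
        self.max_calls_per_tool = max_calls_per_tool
        self.calls = Counter()

    def record(self, tool_name: str) -> None:
        self.calls[tool_name] += 1
        if self.calls[tool_name] > self.max_calls_per_tool:
            raise CallBudgetExceeded(
                f"{tool_name} called {self.calls[tool_name]} times "
                f"(budget: {self.max_calls_per_tool})"
            )


def enrich_record(record_id: str, existing_ids: set, budget: CallBudget) -> dict:
    """Hypothetical enrichment step: verify the record exists, then spend budget."""
    if record_id not in existing_ids:
        # Refuse to "succeed" on a record that is gone; surface it instead.
        raise LookupError(f"record {record_id} no longer exists")
    budget.record("enrich")
    # Placeholder for the real enrichment call (assumed, not from the article).
    return {"id": record_id, "enriched": True}
```

The point of the design is that both conditions raise instead of logging "success", so a runaway loop stops at the budget rather than at the invoice.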

Assistants vs. Agents (The Difference That Actually Matters)

The real difference isn’t intelligence. It’s liability.

An assistant gives suggestions. You’re still accountable.

An agent takes action. Now the action itself carries the accountability, and legally, financially, and operationally, that rolls uphill to you.

Once a system can act without waiting for permission, you are no longer “testing AI.” You are operating software with judgment baked in.

That changes everything: architecture, governance, audit trails, rollback strategies, even how you sleep at night.

Where Teams Keep Crashing (And Yes, This Is Frustration Talking)

We keep seeing the same train wrecks. Giving agents tool access without guardrails. Treating them like “smart chatbots” (they aren’t). Letting vendor marketing departments define internal architecture. Shipping autonomy before observability. Assuming logs equal understanding. Assuming retries equal safety. Assuming someone will notice if it goes wrong. No dashboard warned us. We just found out when the bills arrived. Or worse, when a customer did.

Pause. Breathe.

Then reread that paragraph and count how many apply to your current roadmap.

A Short Failure Story (Because This Is Where the Real Learning Is)

We deployed an agent to handle background reconciliation between two systems. Clean use case. Low stakes. Or so we thought.

The agent hit an intermittent timeout. Instead of failing, it retried—politely, endlessly, and slightly out of sync with the upstream data. Every retry was “successful.” Every result was wrong.

Nothing crashed. Nothing alerted.

Three weeks later, we discovered that reconciliation was technically “complete” and functionally useless. We rolled it back, rewrote the logic, and—this part matters—added a human review gate.

It hurt. It also saved us from repeating the mistake at scale.
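For the curious, the shape of the fix can be sketched roughly like this. It is an illustration under assumptions, not our production code: `reconcile_with_limits`, `Outcome`, and the `version` field used to detect out-of-sync data are all hypothetical. The idea is to bound the retries, treat a stale answer as a failure, and hand anything unresolved to a human.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Outcome:
    status: str              # "ok" or "needs_review"
    value: Optional[dict]
    reason: str = ""


def reconcile_with_limits(
    fetch: Callable[[], dict],
    current_version: Callable[[], int],
    max_attempts: int = 3,
    base_delay_s: float = 0.0,
) -> Outcome:
    """Retry a flaky fetch a bounded number of times, rejecting stale results."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            result = fetch()
        except TimeoutError as exc:
            last_error = f"timeout on attempt {attempt}: {exc}"
        else:
            # A response only counts as success if it matches upstream right now.
            if result.get("version") == current_version():
                return Outcome("ok", result)
            last_error = f"stale result on attempt {attempt}"
        if attempt < max_attempts:
            # Exponential backoff between attempts.
            time.sleep(base_delay_s * 2 ** (attempt - 1))
    # Out of attempts: stop and route to the human review gate.
    return Outcome("needs_review", None, last_error)
```

A caller would queue any `needs_review` outcome for a person instead of marking the reconciliation complete.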

So… Should You Build an Agent?

Here’s the contradiction again: agents are inevitable. They’re also optional—for now.

You should seriously consider agents if:

- Your workflows are long-running and asynchronous by nature.
- Human-in-the-loop checks already exist and slow you down.
- You can afford real observability, not just logs.
- You’re prepared to say no to autonomy in specific edge cases.

You should absolutely not if:

- You’re replacing a simple if-then flow.
- You can’t explain what “success” means at each step.
- You don’t know how to undo an action once it happens.
- You think “the model will figure it out.”

Absolutely not.

Giving an autonomous agent control over something like billing, cancellation, or customer permissions without layered safeguards isn’t innovation. It’s a future apology.
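What “layered safeguards” might look like in code, as a rough sketch: an allowlist of tools, a hold on high-risk actions until a named human approves, and an audit entry for every decision. `ToolGate`, `ActionBlocked`, and the action names below are hypothetical and chosen only to mirror the billing, cancellation, and permissions examples above.

```python
from typing import Callable, Dict, Optional

# Hypothetical action names for the high-risk categories named in the text.
HIGH_RISK = {"update_billing", "cancel_subscription", "change_permissions"}


class ActionBlocked(PermissionError):
    """Raised when the agent requests an action it may not run on its own."""


class ToolGate:
    """Single choke point between the agent and anything that mutates state."""

    def __init__(self, tools: Dict[str, Callable[..., object]]) -> None:
        self.tools = tools
        self.audit_log = []

    def invoke(self, name: str, approved_by: Optional[str] = None, **kwargs):
        # Layer 1: only allowlisted tools can run at all.
        if name not in self.tools:
            self.audit_log.append((name, "rejected: not allowlisted"))
            raise ActionBlocked(f"{name} is not an allowlisted tool")
        # Layer 2: high-risk actions wait for a named human approver.
        if name in HIGH_RISK and approved_by is None:
            self.audit_log.append((name, "held: needs human approval"))
            raise ActionBlocked(f"{name} requires human approval")
        # Layer 3: every executed action leaves an audit entry.
        result = self.tools[name](**kwargs)
        self.audit_log.append((name, f"ran (approved_by={approved_by})"))
        return result
```

Low-risk lookups pass straight through; a cancellation raises until someone signs off, and the audit log records both outcomes.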

The Part No One Likes to Admit

Agents don’t reduce work. They shift it.

You trade manual execution for:

- Design rigor
- Failure modeling
- Guardrails
- Audits
- Governance

If your organization avoids those things already, agents will amplify that weakness, not fix it.

And yet—done right—they’re incredible.

That tension doesn’t go away. Anyone who tells you it does is selling something.

FAQs

Q. What is the main difference between AI agents and chatbots?

The main difference? Autonomy. Chatbots answer. Agents act. That’s it in theory. In reality, it means a chatbot waits for you to type. An agent keeps running, making decisions, and calling tools even when you’ve walked away. Powerful? Yes. Risky? Absolutely.

Q. Are AI agents better than chatbots?

Better… depends. Agents handle multi-step tasks across systems. Chatbots just respond. Most teams pick agents thinking “more AI = better.” Usually, that’s a mistake. Agents add autonomy—and with it, failures you don’t immediately see.

Q. When should you use an AI agent instead of a chatbot?

Only when action matters. If your system must update, reconcile, or act without constant human review, that’s an agent. Otherwise, stick with a chatbot. Seriously—most workflows are fine without autonomous behavior. The hype makes you forget that.

Q. Why do AI agents fail silently?

Because they can. A chatbot will usually say, “I don’t know.” An agent? It executes, thinks it’s correct, and often isn’t. You only notice weeks later when data is off, bills spike, or a customer complains. It’s subtle, and that’s the problem.

Q. Are most AI tools in 2026 really agents?

Not really. Most “agents” are assistants with better prompts and tool access. True agents run independently, remember past states, and plan across steps. Anything less? Marketing rebranded a chatbot. Yes, really.
